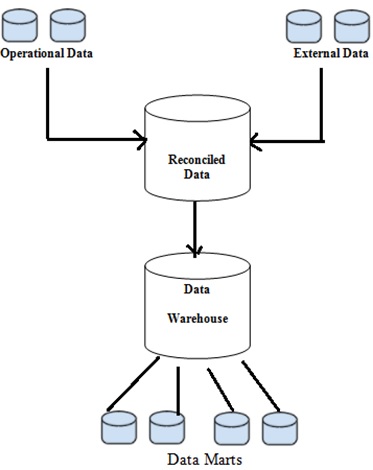

The staging layer stores raw data from different sources, such as transactional systems, relational databases, and other sources. Data warehouses use both the data staging and core layers for batch processing. Require ETL pipelines to transform the data before its use. Support of structured data, with limited support of semi-structured data. Upstreaming to their raw origin is difficult as it’s not accesible to end users. Data warehouse often provides data analysis and visualization tools with multiple features to aid non-technical users access data easily. Let’s review data warehouses by our selected indicators:Īllows end users to easily access all the processed data on a centralized database through SQL. Additionally, subsets of the data warehouse, called data marts, can be provided to answer specialized analytical needs. They minimize input and output (I/O), allowing query results to be delivered faster and to multiple users simultaneously.

Finally, the access layer lets users retrieve the translated and organized data through SQL queries.ĭata warehouse powers reports, dashboards, and analysis tools by storing data efficiently.

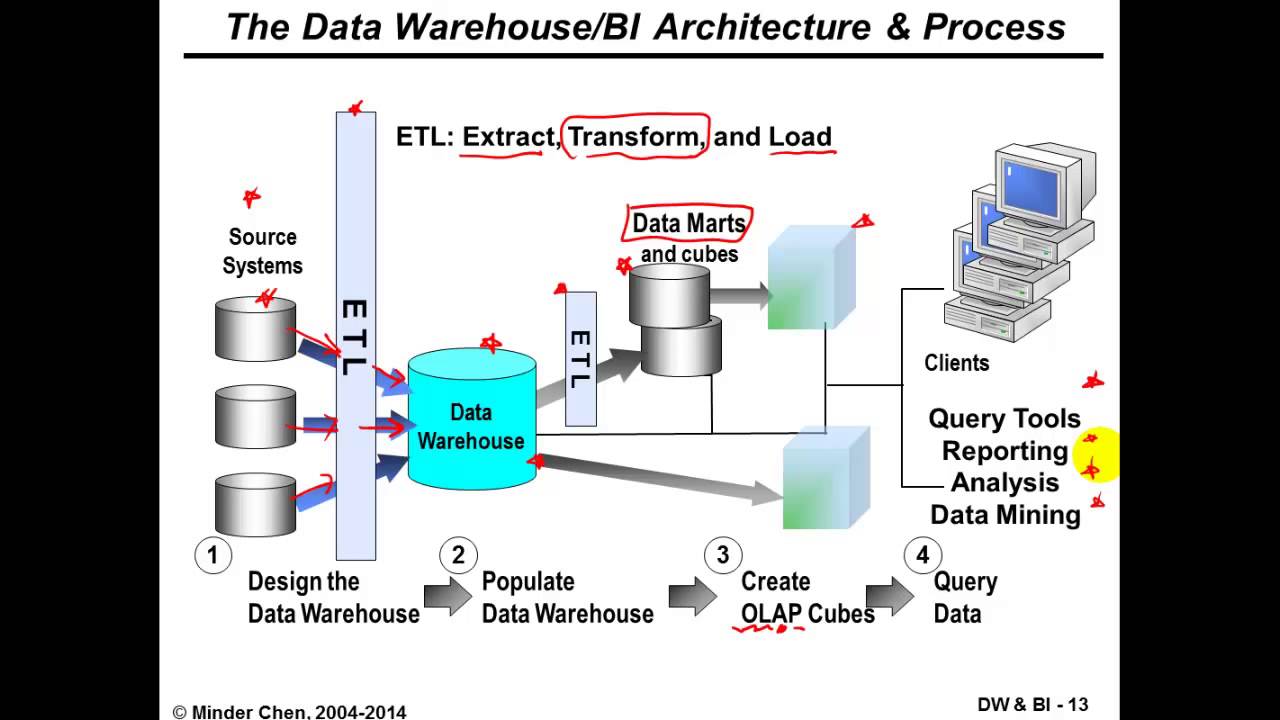

Then data schema-on-write is used to optimize the data model for downstream BI consumption. The first layer sees raw data format transition to a fully transformed set. The data warehouse is considered as the primary source of truth on business operations.Ī standard data warehouse architecture (image above) uses Extract, Transform and Load (ETL) for data transit through three different layers, data staging, data core, and data access. The main motivation for the emergence of data warehouses relied on solving the inconsistent data of RDBMS by transforming data from operational systems to analytical-processing support systems. Their focus is to provide readily available data for advanced querying and analysis. Which usecases the architecture allows for.Ī data warehouse is a centralized system designed to store present and historical data. Knowledge of the data origin, how it’s modified and where it moves over time, enabling to trace errors to it’s root cause.Ībility to set procedures which ensure critical data is formally and securily managed through the company.Ībility to maintain availability and behavior when more resources are demanded.Ībility to update and delete obsolete data.Įfficiency to handle multiple queries concurrently, both in term of throughput and latency.ĭata consistency and accuracy, we will also account for availability. To be more comprehensive, we pre-selected a set of common concerns.Ībility to democratize data by allowing both technical and non-technical users to access crucial data if needed. There are multiple indicators to consider when selecting a database architecture. In this landscape we find a new architecture emerge: the Data Lakehouse, which tries to combine the key benefits of both competing architectures, offering low-cost storage accessible by multiple data processing engines such as Apache Spark, raw access to the data, data manipulation, and extra flexibility. 
This is why we can find modern data lake and data warehouse ecosystems converging, both getting inspiration, borrowing concepts, and addressing use cases from each other. In addition, data is considered immutable, which leads to additional integration efforts. On the other side of the pitch, data lakes enable high throughput and low latency, but they have issues with data governance leading to unmanageable “data swamps”. However, they lack on affordable scalability for petabytes of data. For instance, data warehouses allow for high-performance Business Analytics and fine grained data governance. To this day both solutions remain popular depending on different business needs. Later in the early 2000s Data Lakes appeared, thanks to innovations in cloud computing and storage, enabling to save an exorbitant amounts of data in different formats for future analysis. From the three database structures we are comparing, the first one to appear was the Data Warehouses, introduced in the 80’s with the support of Online Analytical Processing (OLAP) systems, helping organizations face the rise of diverse applications in the 90’s by centralizing and supporting historical data to gain competitive business analytics. Database architectures have experienced constant innovation, evolving with the appearence of new use cases, technical constraints, and requirements.
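The three-layer flow this post describes for data warehouses (staging, core, access) can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline. It assumes SQLite, through the standard `sqlite3` module, as a stand-in for a real warehouse. The table names, the `mart_revenue_by_region` view, and the sample orders are all hypothetical.

```python
import sqlite3

# Hypothetical raw export from an operational system: every field arrives as text.
RAW_ORDERS = [
    ("1001", "2024-01-05", "EU", "19.99"),
    ("1002", "2024-01-05", "US", "5.00"),
    ("1003", "2024-01-06", "EU", "not-a-number"),  # malformed amount
    ("1004", "2024-01-06", "EU", "30.01"),
]


def build_warehouse(rows):
    """Load rows through staging, core, and access layers; return (conn, rejected)."""
    conn = sqlite3.connect(":memory:")

    # Staging layer: raw data lands untyped, exactly as extracted.
    conn.execute(
        "CREATE TABLE staging_orders "
        "(order_id TEXT, order_date TEXT, region TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO staging_orders VALUES (?, ?, ?, ?)", rows)

    # Core layer: schema-on-write, so types and constraints are fixed before loading.
    conn.execute(
        """CREATE TABLE core_orders (
               order_id INTEGER PRIMARY KEY,
               order_date TEXT NOT NULL,
               region TEXT NOT NULL,
               amount_cents INTEGER NOT NULL CHECK (amount_cents >= 0)
           )"""
    )

    rejected = []
    staged = conn.execute(
        "SELECT order_id, order_date, region, amount FROM staging_orders"
    ).fetchall()
    for order_id, order_date, region, amount in staged:
        try:
            # Transform: cast text fields to the types the core schema expects.
            record = (int(order_id), order_date, region, round(float(amount) * 100))
        except ValueError:
            rejected.append(order_id)  # keep track of rows that fail the cast
            continue
        conn.execute("INSERT INTO core_orders VALUES (?, ?, ?, ?)", record)

    # Access layer: a view acting as a small data mart for one analytical need.
    conn.execute(
        """CREATE VIEW mart_revenue_by_region AS
           SELECT region, SUM(amount_cents) AS revenue_cents, COUNT(*) AS orders
           FROM core_orders
           GROUP BY region"""
    )
    conn.commit()
    return conn, rejected


conn, rejected = build_warehouse(RAW_ORDERS)
report = conn.execute(
    "SELECT region, revenue_cents, orders FROM mart_revenue_by_region ORDER BY region"
).fetchall()
print(report)
print(rejected)
```

End users only query the view with plain SQL. The malformed row stays in staging and is reported as rejected rather than loaded into the core table, which is the kind of guarantee schema-on-write is meant to give downstream BI consumers.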
